Drink Motivation Quiz (DMQ)

Reframing a chatbot-only behavioural tool into a journey-led experience aligned with user readiness and organisational strategy.

Organisation: Drinkaware

Role: UX Designer

Overview

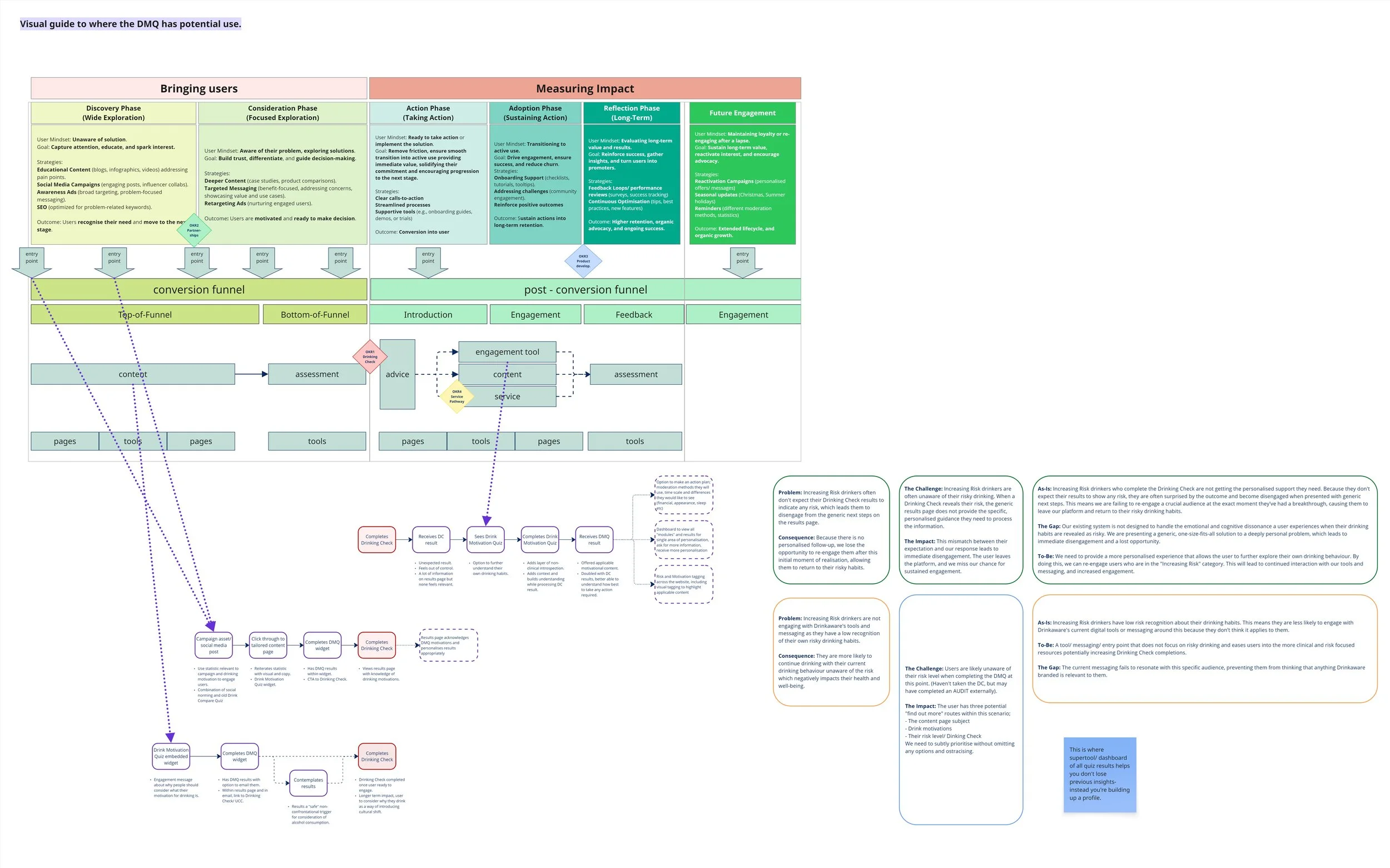

The DMQ was a behavioural quiz confined to Drinkaware's chatbot, with binary results that gave users little to act on and a fundamental knowledge gap at its core that nobody had noticed. I reframed the brief, independently researched the tool's scoring framework, mapped the full user journey, and delivered strategic design recommendations that were approved by the Directorate and are planned for implementation in 2026.

The problem

The DMQ had been running inside Drinkaware's chatbot since 2022. It engaged users, but its reach was narrow and its results were binary: users either had a motivation or they didn't. That framing conflicted with the scaled nature of the questions and left users with no clear sense of what to do next.

I started where the brief pointed me, visualising results, but quickly recognised that designing in isolation wouldn't fix the underlying issues. Without understanding where users were coming from, what the organisation needed, or how success would be measured, any solution would lack strategic grounding.

So I reframed the challenge.

A gap nobody could answer

Before I could redesign the DMQ, I needed to understand how it actually worked. When I asked how the tool was scored and what the possible outcomes were, nobody could tell me, neither internally nor at the external agency that had built it. That was a significant problem for a tool asking users sensitive questions about their drinking behaviour.

Rather than waiting for an answer that wasn't coming, I researched the DMQ framework independently. I identified the different versions of the DMQ in academic literature, cross-referenced the number of questions and motivations in our existing tool, and worked out which version we were using. That research also led me to the DMQ-A, an adult-focused version with motivations that more accurately reflect our audience, which I went on to recommend as part of the redesign.

The approach

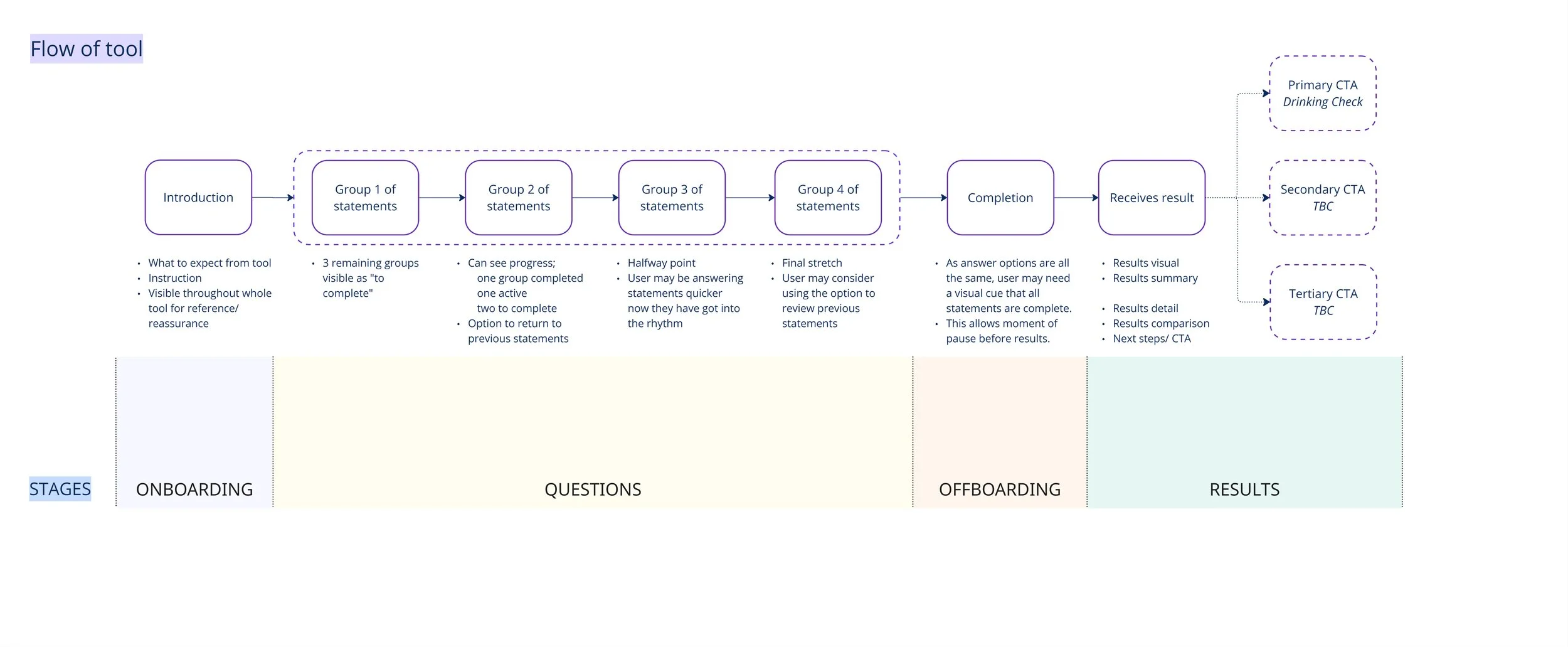

With a clearer picture of the tool's foundations, I mapped the full DMQ journey across three stages:

Onboarding

The questionnaire

Offboarding and results

I used this structure to review existing research, previous testing insights, and service blueprint data. This gave every recommendation a clear rationale tied to a specific moment in the user journey.

When Drinkaware released its 2026–31 strategy mid-project, I paused to realign the work before continuing, making sure the DMQ's aims connected to wider organisational goals and that the target audience was defined precisely enough to be useful.

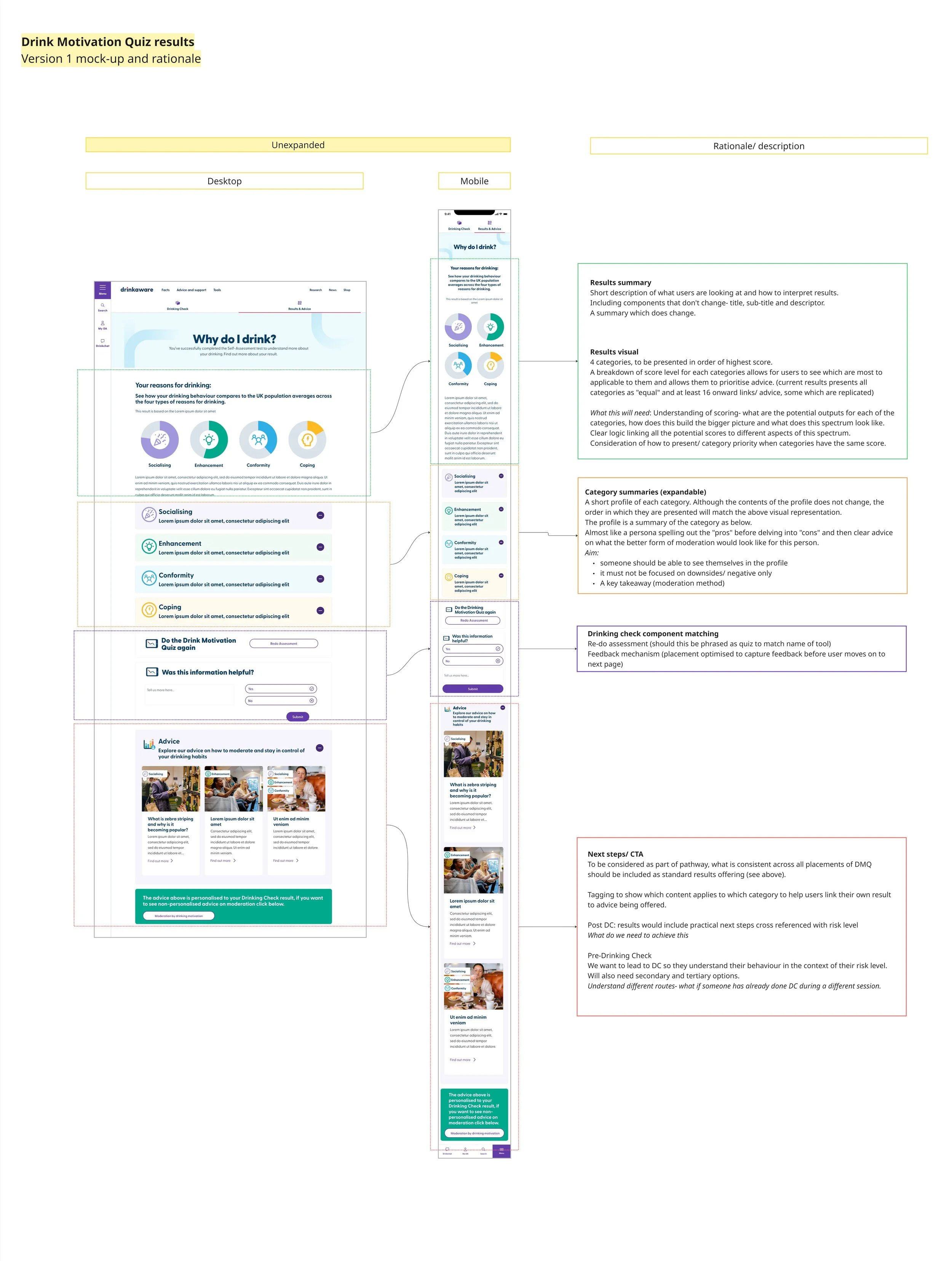

Initial results page mock-up

DMQ user flow

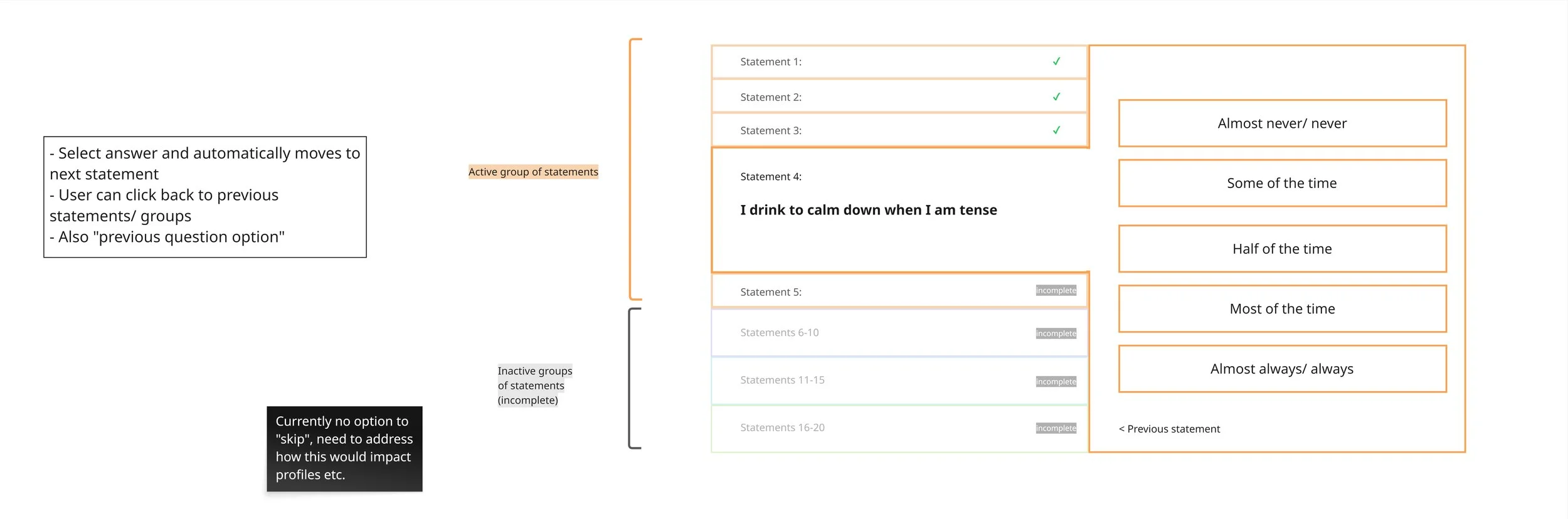

Wireframe showing the grouping of questions

Results mock-ups

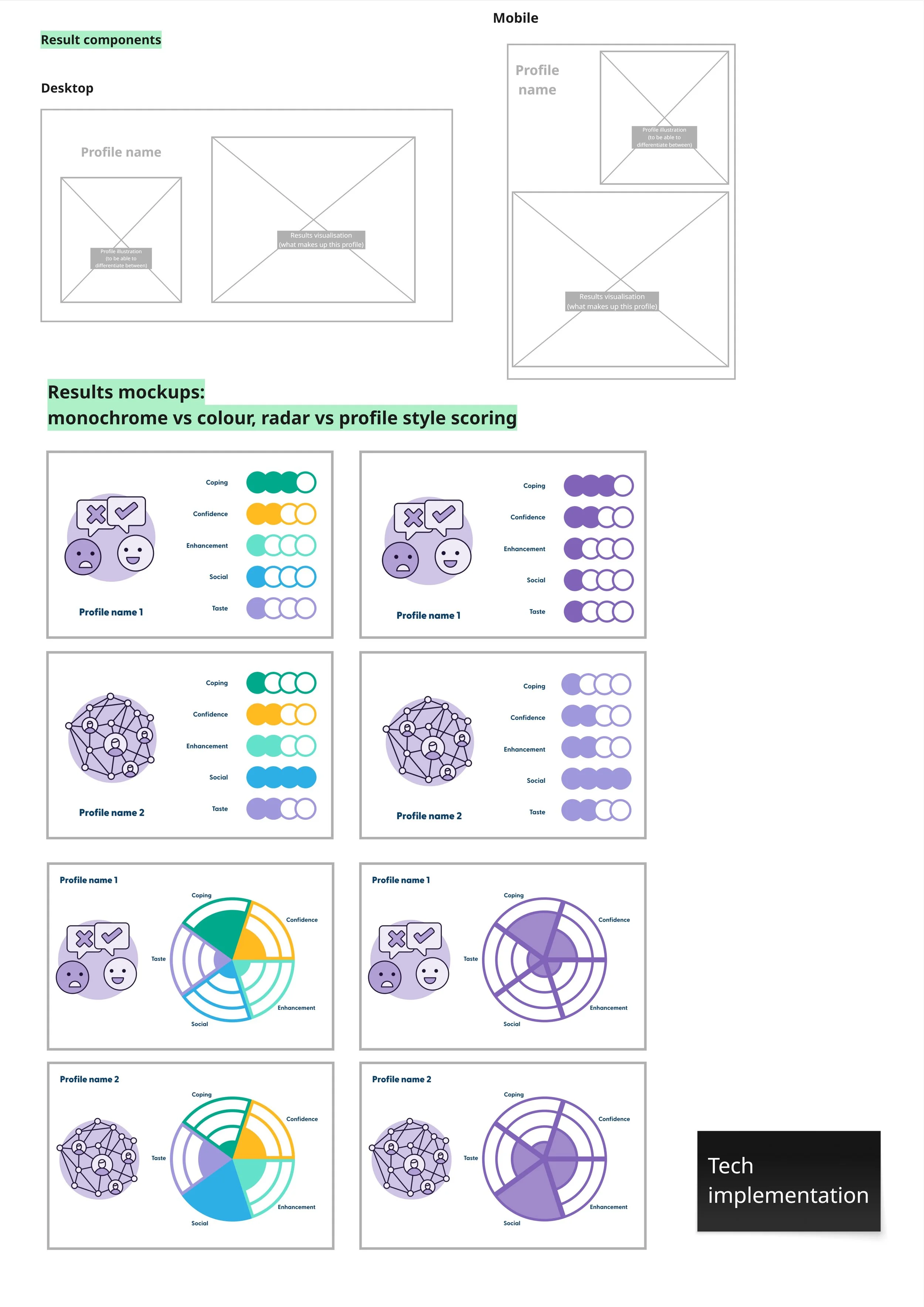

Lo-fi results visual planning

Mapping of potential DMQ use cases

What I recommended

Questionnaire:

Switch to DMQ-A, with updated motivations that better reflect the adult audience (replacing Conformity with Confidence and Taste).

Group 20 questions into four sets of five to reduce fatigue and create a sense of progress.

Enable back navigation and remove the skip option to protect scoring integrity and personalisation.

The importance of onboarding and offboarding

Treat these as critical moments, not afterthoughts. Users disengage easily at the top of the funnel, so onboarding needs to set expectations and build trust before a single question is asked. Offboarding should guide reflection and keep users moving forward.

Results: These should function as a gateway, not an endpoint. I recommended:

Profile-based outcomes

Grouping scores into four clear ranges so users receive a single meaningful result rather than a breakdown of individual scores.

Each profile would carry a short name and a supporting graphic, making it immediately recognisable and easy to act on.
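As an illustration only, the banding idea can be sketched in a few lines of Python. The 1 to 5 answer scale, the equal-width ranges, and the placeholder profile names are all assumptions made for the example; the actual scoring model is still to be developed.

```python
from bisect import bisect_left

# Hypothetical values: 20 answers on an assumed 1-5 scale give totals of 20-100.
# Upper bounds of the first three ranges; the fourth range is everything above.
BAND_UPPER_BOUNDS = [40, 60, 80]  # ranges: 20-40, 41-60, 61-80, 81-100
PROFILE_NAMES = ["Profile A", "Profile B", "Profile C", "Profile D"]  # placeholders


def profile_for(answers):
    """Map a completed questionnaire to a single profile name."""
    if len(answers) != 20:
        raise ValueError("expected 20 answers (no skipped questions)")
    if any(a < 1 or a > 5 for a in answers):
        raise ValueError("each answer must be between 1 and 5")
    total = sum(answers)
    # bisect_left returns the index of the first range whose upper bound
    # is >= total, so a total of exactly 40 still falls in the first range.
    return PROFILE_NAMES[bisect_left(BAND_UPPER_BOUNDS, total)]
```

Requiring all 20 answers mirrors the recommendation to remove the skip option: a single result per user only holds up if every question contributes to the total.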

Defining success

I defined success metrics across two dimensions before the tool goes live:

Funnel progression (click-throughs, CTA completion, onward journeys)

In-tool engagement (start rate and completion rate)

Having this framework in place means the team can evaluate impact from day one rather than retrofitting measurement later.
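A minimal sketch of how the two in-tool engagement metrics might be calculated is shown here. The definitions are assumptions for illustration (start rate as starts divided by landing views, completion rate as completions divided by starts), and the field names are hypothetical rather than taken from any analytics setup.

```python
from dataclasses import dataclass


@dataclass
class FunnelCounts:
    """Hypothetical event counts for one reporting period."""
    views: int        # users who reached the quiz landing screen
    starts: int       # users who answered the first question
    completions: int  # users who reached their results


def rate(numerator, denominator):
    """Return a proportion, or 0.0 when there is nothing to divide by."""
    return numerator / denominator if denominator else 0.0


def engagement(counts):
    """Compute start rate and completion rate from raw counts."""
    return {
        "start_rate": rate(counts.starts, counts.views),
        "completion_rate": rate(counts.completions, counts.starts),
    }
```

For example, `engagement(FunnelCounts(views=200, starts=100, completions=50))` gives a start rate of 0.5 and a completion rate of 0.5.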

Next steps

Recommendations were presented to stakeholders across the Directorate, refined based on feedback, and formalised in a report to guide the next phase of the project. Implementation is planned for 2026, covering end-to-end journey design, results page wireframes, scoring model development, and user testing before handover to an external agency for build.

Reflection

The most valuable decision on this project was an early one: stopping to reframe rather than pushing ahead with a narrow brief. That shift from "how should results look?" to "what does this tool need to do, and for whom?" changed the entire scope. Independently uncovering how the tool was actually scored, something nobody in the organisation could answer, turned out to be the foundation everything else was built on. It reinforced something I've learned across several projects: designing responsibly sometimes means slowing down and asking the questions others have stopped asking.